The Fascination with AI-Powered Content Creation: Why People Take Risks

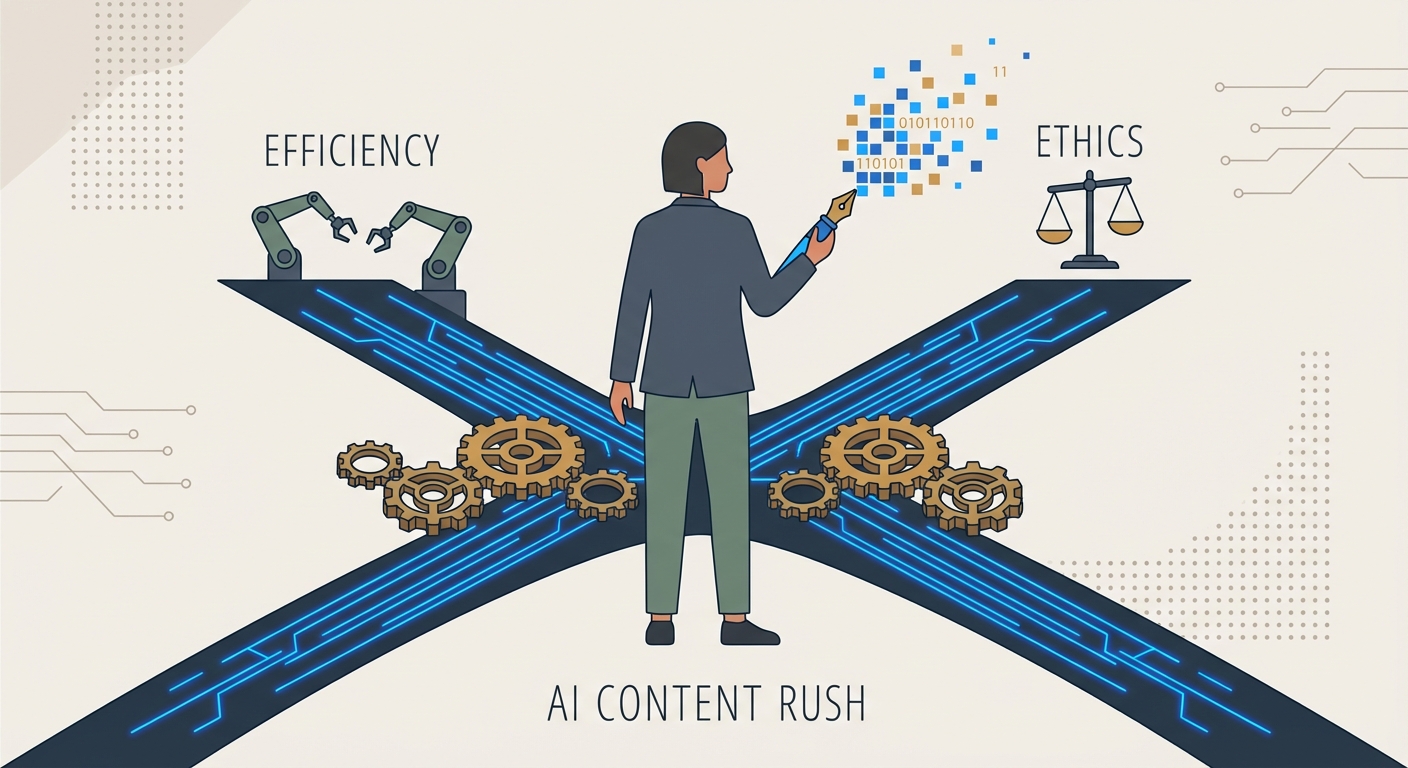

It’s 2026, and we’re knee-deep in a digital Gold Rush. You see it everywhere: that frantic scramble to pump out content at a pace no human could ever sustain. The friction between wanting to be hyper-efficient and needing to stay ethical has become the defining headache of our professional lives.

Let’s be real for a second. Nobody is using AI because they’re oblivious to the risks. They’re doing it because that "near-instant output" siren song is impossible to ignore. We live in an economy that demands a relentless, high-volume firehose of content. We want to transcend our human limits—to be faster, sharper, and more productive. But here’s the kicker: the price of that transcendence is usually the very trust we’ve spent years building.

Why Are We So Drawn to the AI Creative Loop?

The pull of the AI creative loop isn’t just about the tech. It’s psychological. Think about the "blank page syndrome." It’s painful. It’s the internal critic that says, “This isn’t good enough,” or “You’re out of ideas.”

AI kills that critic. It’s the ultimate sedative for the anxious creative. When you offload the heavy lifting to a machine, you aren’t just saving time; you’re silencing that voice of doubt. But this creates a dangerous feedback loop. The more you lean on the machine to bridge the gap between a messy, vague thought and a polished final draft, the less you trust your own brain to do that work.

Then, there’s the "Attachment Paradox." We’re wired to anthropomorphize everything. When a chatbot spits out a witty observation or a perfectly structured argument, it feels like a genuine collaboration. We start treating the model like a peer. We lower our guard. We start trusting it. But as Harvard Business Review’s insights on psychological safety point out, that’s where the rot sets in. When you start treating an LLM as a teammate rather than a tool, the human in the room stops taking responsibility. And that, in a nutshell, is where the professional danger starts.

What Does the 2026 Risk Landscape Actually Look Like?

The "wow, look at this cool tech" phase is long dead. We are now living in the era of indistinguishable deception.

Audiences have wised up. They aren't looking for the glitchy fingers or the weird, robotic syntax anymore. They’re just suspicious of everything. The burden of proof has shifted entirely onto you. Every time your brand drops a piece of content that looks a little too "perfectly generated," you’re playing a losing game against an audience that is actively hunting for the seams.

And then there’s the bias problem. It’s not just a theory anymore; it’s a systemic risk. AI models are trained on the unfiltered, chaotic, and often prejudiced noise of the internet. If you aren’t auditing your outputs, you aren’t just creating content—you’re scaling old-school biases and handing them a megaphone. That’s a fast track to a PR disaster you can’t easily walk back.

Why Is "Innovation" No Longer a Valid Defense?

Remember "move fast and break things"? In 2026, that’s not a mantra—it’s a lawsuit waiting to happen. As the legal walls close in around intellectual property and digital misinformation, hiding behind the "innovation" excuse is a losing strategy. As this analysis of AI risk in 2026 makes clear, the courts have zero patience for "the algorithm did it."

Governance is the new competitive advantage. Our own Responsible AI Policy is built on this simple truth: innovation is only worth the trouble if it’s sustainable. And it’s only sustainable if a human is steering the ship. You cannot outsource your reputation to a model that doesn’t understand what it means to lose one. When you adopt synthetic tools, your job description changes overnight. You’re no longer just a creator; you’re an editor-in-chief and a forensic auditor.

How Do We Build a Human-in-the-Loop Governance Framework?

Stop treating human intervention like a bottleneck. It’s the actual value proposition.

The internet is drowning in AI-generated slop. It’s generic, it’s soulless, and it’s everywhere. The "Authenticity Premium" is going to belong to the brands that can prove a human actually thought about, checked, and crafted the message.

Our pipeline is simple: if it hasn’t been audited, it doesn’t exist. The AI does the heavy lifting on the draft, but a subject matter expert (a living, breathing person) must verify every single claim and check the tone.
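For teams that want to enforce that rule in tooling, here is a minimal sketch of what an "audited or it doesn't exist" gate could look like. It is an illustration, not a description of any particular product or of our internal system: the `Claim`, `Draft`, `audit_gaps`, and `can_publish` names are all hypothetical, and a real pipeline would likely sit inside a CMS or review tool.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Claim:
    """One factual statement pulled from an AI-assisted draft (hypothetical model)."""
    text: str
    verified_by: Optional[str] = None  # name of the human who checked it, if anyone


@dataclass
class Draft:
    """A draft plus the human sign-offs it has collected so far (hypothetical model)."""
    title: str
    claims: List[Claim] = field(default_factory=list)
    tone_checked_by: Optional[str] = None  # name of the human who reviewed tone


def audit_gaps(draft: Draft) -> List[str]:
    """List every reason the draft is not ready to publish."""
    gaps = [f"unverified claim: {c.text}" for c in draft.claims if not c.verified_by]
    if not draft.tone_checked_by:
        gaps.append("tone not checked by a human")
    return gaps


def can_publish(draft: Draft) -> bool:
    """A draft is publishable only when no audit gaps remain."""
    return not audit_gaps(draft)


if __name__ == "__main__":
    draft = Draft(
        title="Example post",
        claims=[Claim("Example claim that needs a source")],
    )
    print(can_publish(draft))   # nothing has been signed off yet
    print(audit_gaps(draft))

    # A subject matter expert signs off on the claim and the tone.
    draft.claims[0].verified_by = "reviewer-name"
    draft.tone_checked_by = "reviewer-name"
    print(can_publish(draft))
```

The design choice worth copying is that the gate defaults to "no": a draft with no recorded human sign-off is blocked, so skipping review takes a deliberate act rather than an oversight.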

Is Your Team Prepared for the Future of Content Authenticity?

This shift is a culture war, not just a software update. If your team is crushed under the pressure of quotas, they will cut corners. That’s where the risk crystallizes. Leaders need to make "human-in-the-loop" a core requirement of the job, not just a line item in a slide deck.

We’ve seen this play out in various Case Studies in Content Strategy where the winners were the ones who treated AI like a smart, but occasionally unreliable, junior intern. You let the intern do the research, but you never let them sign off on the final report. For those looking at the bigger picture, the International AI Safety Report 2026 offers a sobering look at how regulators are finally catching up. The organizations that thrive from here on out will be the ones that treat authenticity as the bedrock of their brand, not a constraint to be worked around.

Frequently Asked Questions

Why are people still using AI for content if the risks are so high?

The primary driver is the intense pressure to maintain high-volume output in an attention-starved economy. AI offers a massive reduction in cognitive load and production time, which many businesses view as essential for staying competitive. The risk is often ignored or underestimated because the immediate gratification of efficiency is more palpable than the long-term, abstract threat of brand erosion or legal liability.

How can I tell if a piece of content was created by AI?

While AI is becoming more sophisticated, it often leaves "tells" such as repetitive sentence structures, a lack of specific, unique anecdotes, and a tendency to hedge opinions with overly neutral language. Advanced detection tools can flag synthetic patterns, but the most reliable method remains human editorial review, which looks for the nuanced "lived experience" and logical consistency that models—even advanced ones—still struggle to replicate authentically.

What is the "Innovation Trap" in AI content creation?

The "Innovation Trap" occurs when a business prioritizes the adoption of new AI tools for the sake of appearing cutting-edge, while simultaneously sacrificing safety, accuracy, and human oversight. Organizations trapped here mistake speed for progress. They often push content out at scale without proper vetting, leading to a degradation of brand trust that far outweighs any short-term gains in production velocity.

How does AI-generated content affect my brand's long-term trust?

Trust is built through consistency and human accountability. When a brand relies heavily on AI-generated content, it risks appearing hollow or disconnected. If an audience detects that the content lacks a human voice or, worse, contains inaccuracies, the brand’s credibility suffers. Over time, an over-reliance on synthetic content can lead to "brand dilution," where the audience no longer sees the company as an authority, but as a source of automated noise.