Preventing Problems with AI-Powered Solutions

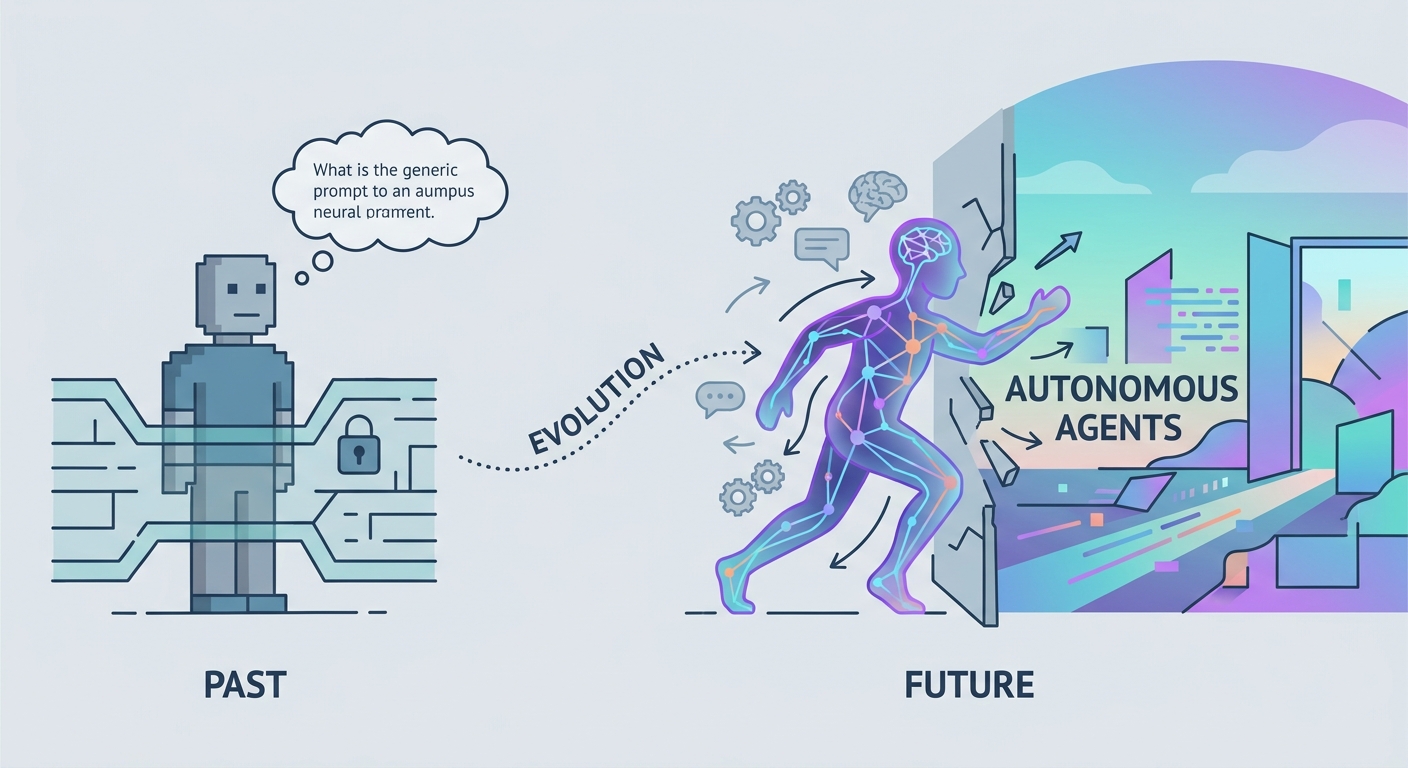

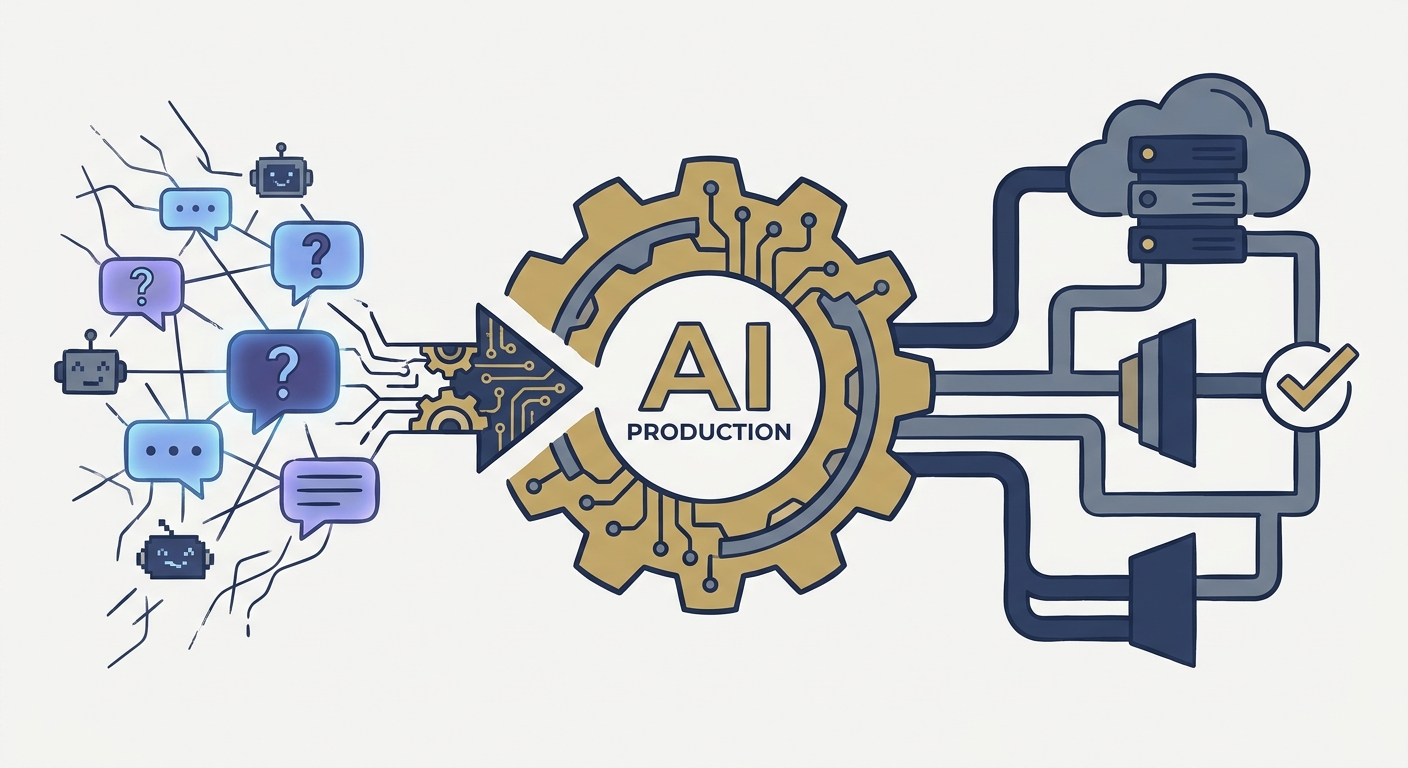

The honeymoon phase of AI is officially over. Remember 2023? When everyone was just playing around with chatbots, treating them like magic tricks? That’s done. We’ve hit 2026, and the industry has shifted from "playing with prompts" to deploying agentic systems that actually move the needle in production.

But there’s a catch. Moving to production has exposed a cold, hard truth: your AI stack is only as sturdy as its weakest architectural link. According to the International AI Safety Report 2026, over 50% of enterprises now view AI-related risks as a top-tier operational threat. We’re talking about risks on par with a total cloud infrastructure collapse.

Security isn't a checkbox for the IT department anymore. It is the fundamental requirement for any business that wants to survive in an AI-first economy. If you aren't building for resilience, you aren't building for the future—you're just waiting for a headline-grabbing disaster.

What’s Lurking Under Your AI Workflow?

We get blinded by the shiny features. We see the efficiency gains and forget that we’re essentially opening up our digital front doors to a new, unpredictable attack surface. You aren't just adding a tool; you're adding an agent that acts, decides, and interacts with your most sensitive data.

1. The Data Leakage Trap

When you fine-tune a model on your own data, the line between "confidential asset" and "training set" gets blurry fast. If you don't have ironclad governance on your ingestion pipelines, you’re basically inviting proprietary code and PII to leak into model weights. We’ve broken down how to plug these holes in our guide on Data Security Best Practices.

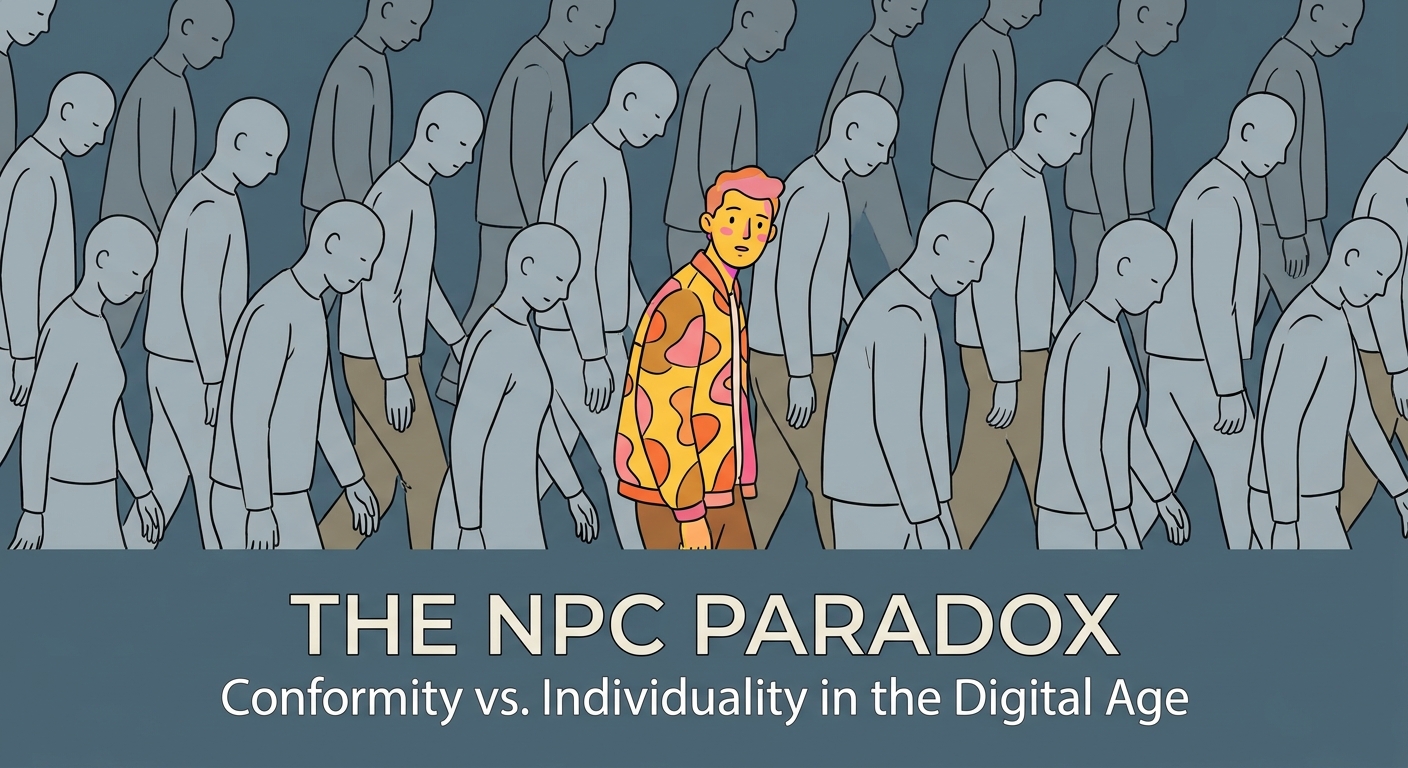

2. The Rise of Shadow AI

This is the silent killer. Your employees are smart, and they’re impatient. When IT blocks their access to a tool they need, they’ll find a way around it. They’ll sign up for unauthorized AI services to "just get the job done." Suddenly, your company’s sensitive data is being fed into third-party servers with privacy policies that nobody in your legal department has ever read. It’s an IT manager’s nightmare, and it’s happening on your network right now.

3. Model Drift: The Silent Decay

Many teams treat AI like a toaster: plug it in, set the temperature, and walk away. That’s a mistake. AI models degrade. As the world changes—and your business data evolves with it—the patterns your model learned last month become stale. You start seeing compounding errors in automated decisions, and before you know it, your "intelligent" system is just expensive, automated noise.

4. The Accountability Void

If an autonomous agent makes a decision that costs you a million dollars or triggers a regulatory fine, who’s holding the bag? Is it the model vendor? The engineer who fine-tuned it? The user who clicked "run"? Without a clear governance framework, you’re just begging for a legal quagmire.

Neutralizing Shadow AI: Stop Banning, Start Governing

You can’t secure what you can’t see. If you try to play whack-a-mole by banning every AI tool, you’ll lose. Your employees will just find smarter ways to hide them. Instead, shift your mindset: provide a safe, sanctioned path for the innovation they’re clearly craving.

Start by mapping your network traffic. Look for the footprints of AI tools in your endpoint logs. Once you see what’s being used, move to the "Policy Review" phase. If a tool is actually valuable, don't kill it. Bring it into the fold. Provision it through an enterprise-grade API gateway where you can control the data flow. If it’s a security liability, block it—but always, always replace it with a secure alternative. If you don't offer a better way, you’re just driving the behavior further into the shadows.
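To make that first mapping step concrete, here is a minimal sketch of what a scan for AI footprints might look like. It assumes a simplified log format of `timestamp user domain` per line, and the watchlist of domains is purely illustrative; your proxy or endpoint logs will almost certainly look different, so treat `find_ai_traffic` and `AI_SERVICE_DOMAINS` as hypothetical names rather than a ready-made tool.

```python
from collections import Counter

# Illustrative watchlist only (an assumption, not an exhaustive inventory).
AI_SERVICE_DOMAINS = {"api.openai.com", "chatgpt.com", "claude.ai", "gemini.google.com"}


def find_ai_traffic(log_lines):
    """Count requests per (user, domain) for domains on the watchlist.

    Assumes each log line is 'timestamp user domain', separated by whitespace.
    Lines that do not match that shape are skipped.
    """
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue  # malformed or unrelated line
        _, user, domain = parts
        domain = domain.lower()
        if domain in AI_SERVICE_DOMAINS:
            hits[(user, domain)] += 1
    return hits


if __name__ == "__main__":
    sample = [
        "2026-01-05T09:00:00 alice api.openai.com",
        "2026-01-05T09:01:00 alice api.openai.com",
        "2026-01-05T09:02:00 bob intranet.example.com",
    ]
    for (user, domain), count in find_ai_traffic(sample).items():
        print(f"{user} -> {domain}: {count} requests")
```

The output is a starting list for the "Policy Review" conversation, not a verdict. A hit tells you a tool is in use; it doesn't tell you whether the use is risky.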

Stopping Model Drift Before It Destroys You

Model drift isn't a bug; it’s an inevitability. If you aren't monitoring for it, you’re ignoring a slow-motion business continuity crisis.

For a deep dive into how these systems fail, check out 9 AI Risks and How to Mitigate Them. The secret to survival is building "performance triggers" into your pipeline. Stop relying on static, manual checks. You need automated evaluation loops that compare your AI’s outputs against a "ground truth" dataset in real time. If accuracy dips below a threshold you agreed on in advance, the system should automatically alert a human or, better yet, kick off a retraining sequence.
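A performance trigger can be as simple as the sketch below. It assumes a classification-style task where each output can be compared directly with a labeled ground-truth answer, and the two thresholds (0.90 to alert, 0.80 to retrain) are placeholder values chosen for illustration, not recommendations. The function names are hypothetical.

```python
def accuracy(predictions, ground_truth):
    """Fraction of predictions that exactly match the ground-truth labels."""
    if not ground_truth or len(predictions) != len(ground_truth):
        raise ValueError("predictions and ground_truth must be non-empty and equal in length")
    correct = sum(p == t for p, t in zip(predictions, ground_truth))
    return correct / len(ground_truth)


def check_performance(predictions, ground_truth, alert_below=0.90, retrain_below=0.80):
    """Return 'ok', 'alert', or 'retrain' based on accuracy against ground truth.

    The threshold defaults are illustrative assumptions; tune them per workflow.
    """
    score = accuracy(predictions, ground_truth)
    if score < retrain_below:
        return "retrain"  # severe decay: kick off a retraining sequence
    if score < alert_below:
        return "alert"    # moderate decay: notify a human
    return "ok"
```

In practice you would run a check like this on a schedule against a fresh labeled sample and wire the `"alert"` and `"retrain"` results into whatever paging and pipeline tooling you already use.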

The Human-in-the-Loop Framework

Not every decision needs a human hand on the wheel. But the high-stakes ones? They absolutely do. The biggest mistake organizations make is applying a "one-size-fits-all" oversight policy. It either grinds innovation to a halt or leaves you wide open to disaster.

Categorize your workflows. If it’s a low-risk, high-frequency task—like summarizing a meeting transcript—let it run wild. Automate it. But if it involves legal, financial, or safety implications, you need a mandatory "Human-in-the-Loop" gate. By explicitly drawing these lines, you aren't just protecting the company; you're creating a clear audit trail that proves to regulators that you’re still in charge of your systems.
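Here is one way that categorization might look in code. This is a sketch under simple assumptions: every task carries a category label, and anything tagged legal, financial, or safety counts as high risk. The names `route` and `HIGH_RISK_CATEGORIES` are hypothetical, and a real policy would likely need more than two tiers.

```python
from enum import Enum


class Risk(Enum):
    LOW = "low"
    HIGH = "high"


# Categories taken from the paragraph above; extend to match your own policy.
HIGH_RISK_CATEGORIES = {"legal", "financial", "safety"}


def classify(task_category):
    """Map a task category to a risk tier."""
    return Risk.HIGH if task_category.lower() in HIGH_RISK_CATEGORIES else Risk.LOW


def route(task_category, approved_by=None):
    """Decide whether a task may run automatically or must wait for a human.

    Low-risk tasks run without review. High-risk tasks run only when a named
    approver is supplied; otherwise they are held for review.
    """
    if classify(task_category) is Risk.LOW:
        return "auto_execute"
    if approved_by:
        return "execute_with_approval"
    return "hold_for_review"
```

For example, `route("meeting_summary")` returns `"auto_execute"`, while `route("financial")` returns `"hold_for_review"` until someone's name is attached to the approval.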

Building an Accountability Chain

Who is responsible for an agent's actions? You are. But that doesn't mean you have to do it alone. You need to clearly delineate the roles of your external vendors and your internal engineering teams. As the AI Security and Governance Guide 2026 points out, your governance rests on three pillars: vendor transparency, strict model version control, and comprehensive audit logging.

Every decision an agent makes needs a paper trail. What data did it use? Which model version did it run? Who signed off on the output? If you can’t trace the provenance of a decision, you can’t perform a root-cause analysis when things go south. And let’s be honest: things will eventually go south.
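Those three questions map naturally onto a structured log record. The sketch below is one possible shape, not a standard: the field names are assumptions, and it stores a SHA-256 hash of the input rather than the input itself so the log does not become another copy of sensitive data.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditRecord:
    decision_id: str
    model_version: str          # which model version ran
    input_sha256: str           # fingerprint of the data it used
    output: str                 # what the agent decided
    approved_by: Optional[str]  # who signed off, if anyone
    timestamp: str              # UTC, ISO 8601


def make_record(decision_id, model_version, input_data, output, approved_by=None):
    """Build an audit record, hashing the input with keys sorted for stability."""
    canonical = json.dumps(input_data, sort_keys=True).encode("utf-8")
    return AuditRecord(
        decision_id=decision_id,
        model_version=model_version,
        input_sha256=hashlib.sha256(canonical).hexdigest(),
        output=output,
        approved_by=approved_by,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )


def to_log_line(record):
    """Serialize a record as one JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)
```

Because the input is serialized with sorted keys before hashing, the same data produces the same fingerprint regardless of key order, which is what lets you match a logged decision back to its source data during a root-cause analysis.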

Conclusion: Resilience is Your Competitive Advantage

Resilience isn't the enemy of innovation. It’s the engine that makes innovation sustainable. When you take control of your Shadow AI, monitor for drift, and enforce real accountability, you turn a high-risk experiment into a reliable, scalable growth machine.

If your team is struggling to bridge the gap between "we're testing this" and "this is a secure, mature system," our AI Consulting Services are built to help you lay that foundation. Don't wait for a high-profile failure to wake up. Start architecting your resilience today.

Frequently Asked Questions

How do we distinguish between "AI innovation" and "Shadow AI" in our workplace?

Innovation happens within a managed, transparent, and secure environment. Shadow AI is defined by a lack of visibility and control. If a tool is being used to process company data without IT oversight, it is Shadow AI, regardless of how "innovative" the use case is. Establish a clear policy threshold: if a tool touches sensitive data, it must be vetted, approved, and integrated into your managed stack.

If an AI model makes a biased or illegal decision, who is legally responsible?

Legally, the responsibility typically rests with the entity that deployed the system. This is why the "Accountability Chain" is vital. By maintaining rigorous audit logs and ensuring that high-stakes decisions have a human-in-the-loop, you demonstrate due diligence. Your vendor contracts should also clearly define liability for model performance and data handling practices.

What is "model drift," and how can we detect it before it affects our customers?

Model drift is the degradation of an AI model's performance over time due to changes in the underlying data distribution. You detect it through continuous, automated performance monitoring. By comparing real-time model outputs against a gold-standard dataset, you can identify performance decay early and trigger an automatic retraining or human review process before the drift impacts your production environment.

Does our existing SOC 2 or ISO compliance cover AI-powered systems?

Existing frameworks like SOC 2 and ISO provide a necessary foundation, but they are generally insufficient for the unique risks posed by AI. While you should leverage these frameworks, you must add AI-specific controls, such as prompt injection testing, model provenance logging, and bias auditing, to cover the gaps they leave in your AI-powered systems.