Understanding Generative AI for Visual Content Development

TL;DR

- Shift from simple prompt-based AI to sophisticated Agentic Design workflows.

- Use AI agents to handle repetitive grunt work and technical asset refinement.

- Build an integrated orchestration layer to ensure brand and project alignment.

- Increase creative leverage by focusing on high-level strategy and human oversight.

The era of typing a prompt, hitting "generate," and praying for gold is dead. If you’re still treating AI like a glorified slot machine for stock photos, you aren’t just behind the curve—you’re actively bleeding money.

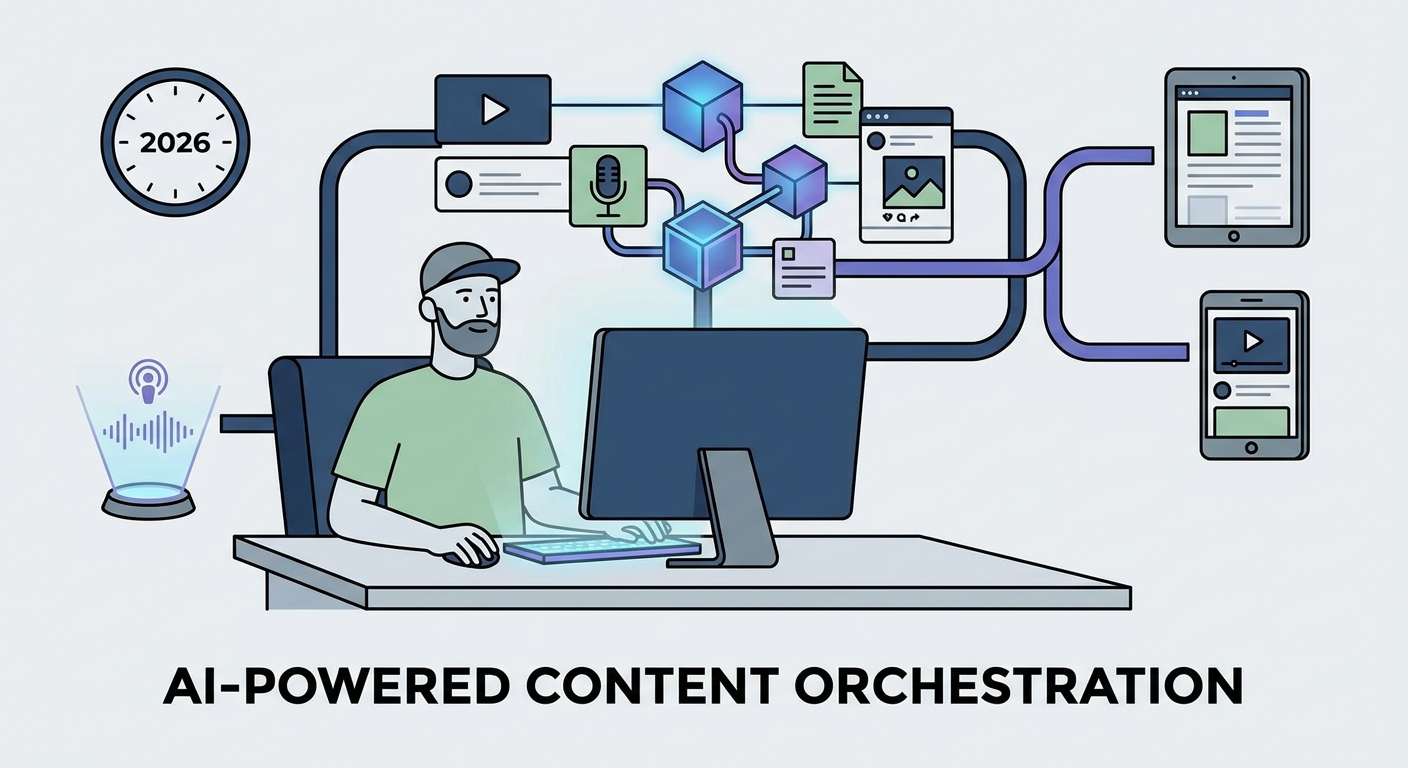

By 2026, the novelty has worn off. Nobody cares that you made a cool picture with a chatbot. Brands are now demanding utility, strict brand alignment, and high-ROI execution. We’ve moved past the "magic trick" phase of Generative AI and entered the age of Agentic Design.

It’s time to stop babysitting your tools and start building an orchestra.

Beyond the Hype: The Rise of Agentic Design

For years, the industry was obsessed with text-to-image prompts. It was a parlor trick. Sure, it could spit out a surreal portrait or a sunset, but it lacked the structural integrity required for professional work. As the MIT Technology Review's analysis of Generative AI points out, we are witnessing a critical shift. We are moving from "tools" to "agents."

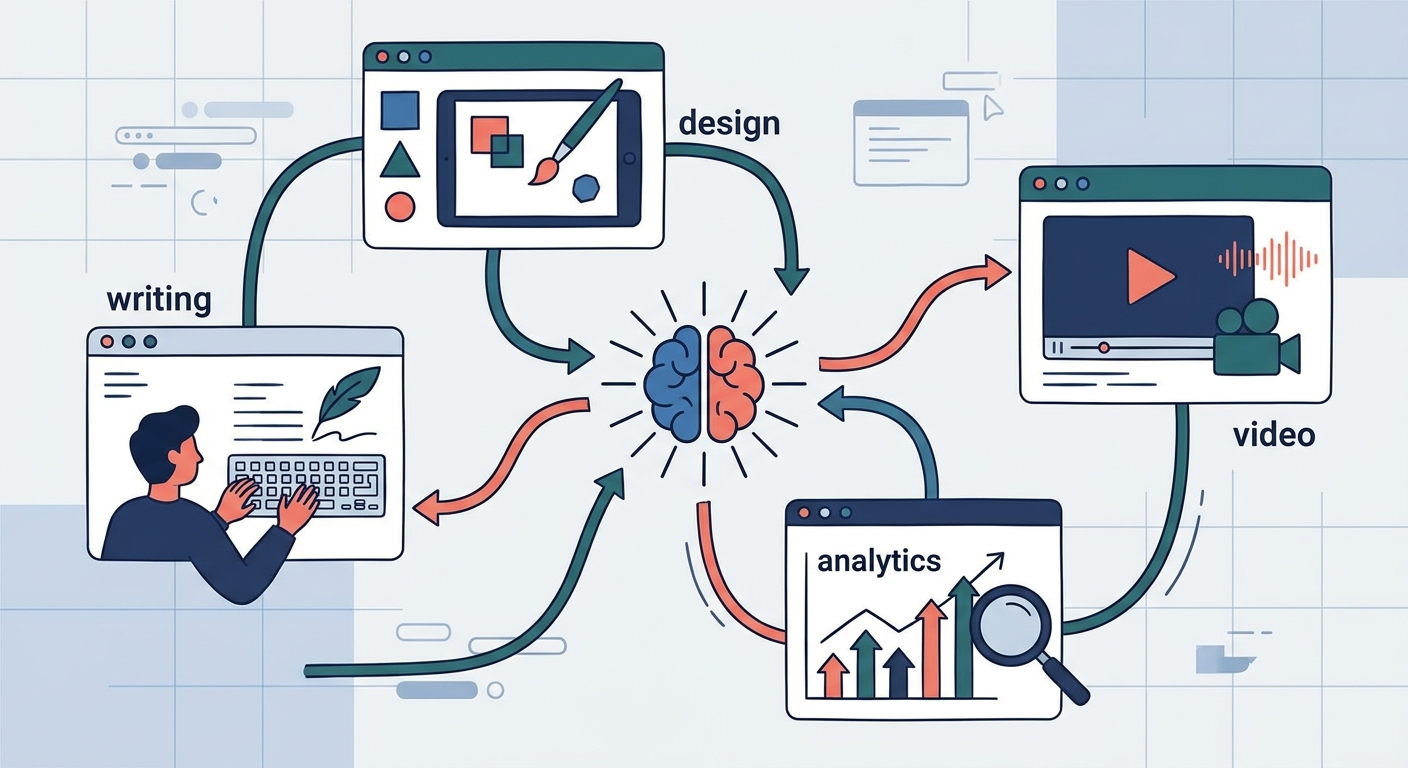

An agent isn't just an app you open. It’s a member of your creative team. It knows your brand guidelines by heart. It retrieves project briefs. It executes across formats without needing you to hold its hand through every pixel-pushing step. While a basic generative tool sits there waiting for instructions, an agentic system acts. It asks the right questions: “Which audience segment are we targeting?” or “Does this composition actually meet our accessibility standards?”
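To make the accessibility question concrete: one check an agent could run on its own is the WCAG contrast ratio between text and background colors. Here is a minimal sketch, assuming colors arrive as 8-bit sRGB tuples. The function names are illustrative; the luminance formula and the 4.5:1 and 3:1 thresholds come from the WCAG 2.x level AA criteria.

```python
def _linearize(channel: int) -> float:
    """Convert one 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: tuple) -> float:
    """Weighted sum of the linearized red, green, and blue channels."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(fg: tuple, bg: tuple) -> float:
    """Ratio of lighter to darker luminance, each offset by 0.05."""
    lighter, darker = sorted(
        (relative_luminance(fg), relative_luminance(bg)), reverse=True
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(fg: tuple, bg: tuple, large_text: bool = False) -> bool:
    """Level AA asks for 4.5:1 on normal text and 3:1 on large text."""
    return contrast_ratio(fg, bg) >= (3.0 if large_text else 4.5)
```

Black on white comes out at roughly 21:1, the maximum possible. A check like this can gate every generated layout before a human ever sees it.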

That is the difference between a toy and a production engine.

What Does "Agentic AI" Mean for Your Creative Workflow?

Let’s be honest: your current workflow is a bottleneck. Designers can spend as much as 60% of their time on "grunt work": resizing assets for social, color-grading for different displays, and fighting with file hierarchies.

Agentic AI flips the script. It handles the technical heavy lifting, leaving the human creative to do what they’re actually paid for: curation, strategy, and that final, vital touch of human intuition.

Think of it as a supervisor relationship. You set the vision and define the constraints. The AI handles the iteration, the asset generation, and the boring compliance checks. This isn't about replacing the artist; it’s about giving the artist the leverage to produce ten times the output without burning out.

How Do You Build a Full-Stack Visual Pipeline?

The biggest mistake teams make? Fragmented toolchains. You need an ecosystem where the creative brief is the source of truth, feeding into an orchestration layer that triggers text, vector, and image generation simultaneously.

By bridging the gap between strategy and execution, you kill "design drift." When your orchestration layer knows that a LinkedIn banner has to match the visual language of your homepage, you achieve a level of consistency that used to require a massive, exhausted staff.
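What might that orchestration layer look like in practice? Here is a rough sketch, assuming a single `Brief` object acts as the source of truth and each generator is a plain function. Every name here (`Brief`, `generate_copy`, `orchestrate`, and so on) is hypothetical, and the generators are stubs standing in for real model calls.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Brief:
    """The single source of truth every generator reads from."""
    campaign: str
    audience: str
    palette: tuple


def generate_copy(brief: Brief) -> str:
    # Stub: a real pipeline would call a text model here.
    return f"[copy] {brief.campaign} for {brief.audience}"


def generate_vector(brief: Brief) -> str:
    # Stub: a real pipeline would call a vector/logo generator here.
    return f"[vector] {brief.campaign} in {brief.palette[0]}"


def generate_image(brief: Brief) -> str:
    # Stub: a real pipeline would call an image model here.
    return f"[image] {brief.campaign} in {brief.palette[0]}"


GENERATORS = {
    "copy": generate_copy,
    "vector": generate_vector,
    "image": generate_image,
}


def orchestrate(brief: Brief) -> dict:
    """Fan the same brief out to every generator concurrently."""
    with ThreadPoolExecutor() as pool:
        futures = {name: pool.submit(fn, brief) for name, fn in GENERATORS.items()}
        return {name: future.result() for name, future in futures.items()}
```

Because every generator receives the same frozen brief, none of them can quietly drift from the palette or audience the others are using.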

Why "Human-in-the-Loop" is the New Quality Standard

We are drowning in "AI Slop." You know the look: generic, soulless, plastic images that make your brain itch. Fatigue is setting in, and audiences are getting smarter. They can smell an unedited AI image from a mile away.

To survive this, adopt the 80/20 rule: 80% automation for the heavy lifting, 20% human expertise for the final polish.
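One way to enforce that human 20% is a hard review gate: nothing ships until a named person signs off. The sketch below is one possible shape for that gate, not a prescribed workflow. `Asset`, `Status`, `review`, and `publishable` are all made-up names for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    DRAFT = "draft"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Asset:
    name: str
    status: Status = Status.DRAFT
    reviewer: Optional[str] = None  # who signed off, if anyone


def review(asset: Asset, reviewer: str, approve: bool) -> Asset:
    """Record a human decision on an AI-generated asset."""
    asset.status = Status.APPROVED if approve else Status.REJECTED
    asset.reviewer = reviewer
    return asset


def publishable(assets: list) -> list:
    """Only assets a named human has approved are allowed out the door."""
    return [a for a in assets if a.status is Status.APPROVED and a.reviewer]
```

Drafts and rejections never reach `publishable`, so unedited output cannot slip through by default.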

Maintaining your brand’s "DNA" requires more than just a style prompt. Check out our guide to brand consistency to see how top-tier teams are using fine-tuned models—trained on their own proprietary assets—to ensure their work never looks like a generic stock image. The human is no longer the laborer; they are the curator, the editor, and the final arbiter of taste.

Visual Trust and Provenance

In a world of deepfakes, visual trust is your most valuable currency. If you can't prove where your assets came from, you're going to lose your audience's confidence.

The Content Authenticity Initiative, founded by Adobe, is leading the way here with "Content Credentials." Think of them as tamper-evident, cryptographically signed metadata attached to your assets, recording how they were created and what, if anything, was touched by AI.
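To get a feel for the idea, here is a toy provenance record built with Python's standard library. This is not the Content Credentials format or any official API: real credentials rely on certificate-based signatures, while this sketch assumes a shared secret key and an invented record layout purely to show the principle of binding metadata to an asset's hash.

```python
import hashlib
import hmac
import json


def build_record(asset_bytes: bytes, tool: str, ai_generated: bool) -> dict:
    """Describe an asset and bind the description to its content hash."""
    return {
        "sha256": hashlib.sha256(asset_bytes).hexdigest(),
        "tool": tool,  # hypothetical field name
        "ai_generated": ai_generated,  # hypothetical field name
    }


def sign(record: dict, key: bytes) -> str:
    """Sign the record with HMAC-SHA256 over its canonical JSON form."""
    payload = json.dumps(record, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify(asset_bytes: bytes, record: dict, signature: str, key: bytes) -> bool:
    """True only if neither the record nor the asset has been altered."""
    record_intact = hmac.compare_digest(sign(record, key), signature)
    asset_matches = record["sha256"] == hashlib.sha256(asset_bytes).hexdigest()
    return record_intact and asset_matches
```

Change a single pixel or flip the `ai_generated` flag after signing, and `verify` returns `False`. That tamper-evidence is the property that makes provenance worth trusting.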

Transparency isn't just a compliance headache; it’s a competitive advantage. When your audience knows your content is ethically produced, they engage. Brands that hide their AI use are viewed with suspicion. Brands that embrace provenance build trust.

Hyper-Personalization at Scale

Static content is dead weight in a dynamic market. In 2026, the best campaigns are data-driven, morphing in real time based on user behavior.

Imagine a hero image on your site that shifts its color palette or composition based on the user’s region or browsing history. This is the promise of dynamic visual development. As we break down in the future of marketing automation, the goal is to shift from "one-size-fits-all" to "one-size-fits-one."
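At its simplest, that region-aware hero image is just a lookup with a safe fallback. A deliberately small sketch follows; the region codes and file names are placeholders, and a production system would likely weigh far more signals than region alone.

```python
from typing import Optional

# Hypothetical mapping from region code to a pre-rendered hero variant.
HERO_VARIANTS = {
    "EU": "hero_cool_palette.png",
    "APAC": "hero_warm_palette.png",
}
DEFAULT_HERO = "hero_default.png"


def pick_hero(region: Optional[str]) -> str:
    """Return the hero variant for a region, falling back to the default."""
    if not region:
        return DEFAULT_HERO
    return HERO_VARIANTS.get(region.strip().upper(), DEFAULT_HERO)
```

The fallback matters as much as the variants: an unknown or missing region should still land on an on-brand default, never a broken image.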

Navigating the Legal Minefield

The legal status of AI-generated work is still a volatile frontier. According to the Stanford HAI: AI Index Report 2026, copyright protection often comes down to how much "human creative control" was involved. If you just type a prompt and take the output, good luck protecting that asset. If you use AI as a component in a larger, human-directed process, you’re in a much stronger position.

Then there’s the data issue. Using models trained on scraped, copyrighted data without attribution is a ticking time bomb. Smart companies are moving toward "walled garden" AI models—private environments where the training data is fully licensed and audited. If you aren't auditing your training sets, you’re inviting a lawsuit.

Case Study: The Transformation

Let’s look at a mid-sized marketing firm from 2024. Their process was agonizingly linear: a copywriter writes a brief, a designer waits for copy, then spends days creating assets, followed by endless feedback loops and manual resizing. Everything was "hand-crafted"—and everything was slow.

By late 2026, that same firm had gone agentic. Now, the brief is ingested by an orchestrator, which generates the core concept, creates base assets using fine-tuned style LoRAs, and automatically exports them into 40+ variations. The design team? They spend their time on high-level creative direction, tweaking the AI’s output to keep the brand voice sharp.
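The "export into dozens of variations" step is mostly arithmetic. As an illustration, the sketch below computes a centered crop box for each target aspect ratio from one master image. The target sizes are invented examples, not any platform's official specs, and the function names are hypothetical. The returned `(left, top, right, bottom)` tuple matches the box format that Pillow's `Image.crop` accepts.

```python
def center_crop_box(src_w: int, src_h: int, target_w: int, target_h: int) -> tuple:
    """Largest centered box inside the source that has the target aspect ratio."""
    # Cross-multiply to compare aspect ratios without floating point.
    if src_w * target_h > src_h * target_w:
        # Source is wider than the target: keep full height, trim the sides.
        crop_w, crop_h = src_h * target_w // target_h, src_h
    else:
        # Source is taller (or equal): keep full width, trim top and bottom.
        crop_w, crop_h = src_w, src_w * target_h // target_w
    left = (src_w - crop_w) // 2
    top = (src_h - crop_h) // 2
    return (left, top, left + crop_w, top + crop_h)


# Hypothetical export targets (name -> width, height in pixels).
TARGETS = {
    "square": (1080, 1080),
    "wide": (1920, 1080),
    "tall": (1080, 1920),
}


def plan_exports(src_w: int, src_h: int) -> dict:
    """Map each target name to the crop box to cut from the master image."""
    return {
        name: center_crop_box(src_w, src_h, w, h)
        for name, (w, h) in TARGETS.items()
    }
```

Add a row to `TARGETS` and every future campaign picks up the new format automatically. That is the kind of grunt work nobody should be doing by hand.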

The result: a 400% increase in volume and a 60% reduction in production time. Higher quality. Less stress.

Future Outlook: What’s Next?

The next 18 months will be defined by the democratization of 3D asset generation and real-time video. We’re moving toward a world where a flat 2D concept can be instantly extruded into a 3D environment or turned into high-fidelity video with a single command.

The barrier to entry is collapsing. The brands that win won't be the ones with the most expensive tools; they’ll be the ones that best orchestrate these tools into a coherent, brand-aligned system.

The "AI-only" era is dead. The "Agentic-Human Partnership" era has arrived. Welcome to the future.

Frequently Asked Questions

How do I ensure my AI-generated visuals stay on-brand?

You must move beyond generic prompting. Use custom fine-tuned models (LoRA adapters or DreamBooth fine-tunes) trained specifically on your brand’s existing assets. These models steer output toward your visual guidelines, including color palettes, typography, and stylistic nuances, so that the AI operates within your established creative guardrails.

Is AI-generated visual content considered copyrightable in 2026?

Copyright law remains complex. Generally, raw AI output is not copyrightable. However, if you integrate AI-generated assets into a composition that you have significantly modified, arranged, or edited, the resulting work is often eligible for protection. Always maintain a "human-in-the-loop" record of your iterative design process to prove the creative contribution.

What is the difference between "Generative AI" and "Agentic AI" in design?

Generative AI is a tool—it waits for a prompt to produce an output. Agentic AI is a system—it is goal-oriented. It understands the project, breaks it down into actionable tasks, manages the workflow, and executes across multiple stages, effectively acting as an autonomous member of your team rather than just a software utility.
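The "breaks it down into actionable tasks" part can be pictured as a dependency graph that the system walks in order. Here is a minimal sketch using Python's standard `graphlib` module (Python 3.9+). The task names and their dependencies are invented for illustration, and a real agent would plan this graph dynamically and not read it from a constant.

```python
from graphlib import TopologicalSorter

# Hypothetical plan: each task maps to the set of tasks it depends on.
TASKS = {
    "read_brief": set(),
    "draft_concept": {"read_brief"},
    "generate_assets": {"draft_concept"},
    "check_compliance": {"generate_assets"},
    "human_review": {"check_compliance"},
}


def execution_order(tasks: dict) -> list:
    """Return tasks in an order that respects every dependency."""
    return list(TopologicalSorter(tasks).static_order())
```

For this linear plan, the order runs from `read_brief` through to `human_review`. The human sign-off sits at the end of the chain by design.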

How do I avoid the "uncanny valley" or generic look in AI visuals?

Avoid "one-shot" generation. The generic look comes from accepting the first output. Use iterative refinement: generate a base, edit the composition, use "inpainting" to fix specific details, and finally, pass the image through a human-led post-production phase to inject the "imperfections" and nuances that define authentic, human-made design.