AI Filters Sparking Trends in Digital Content Creation

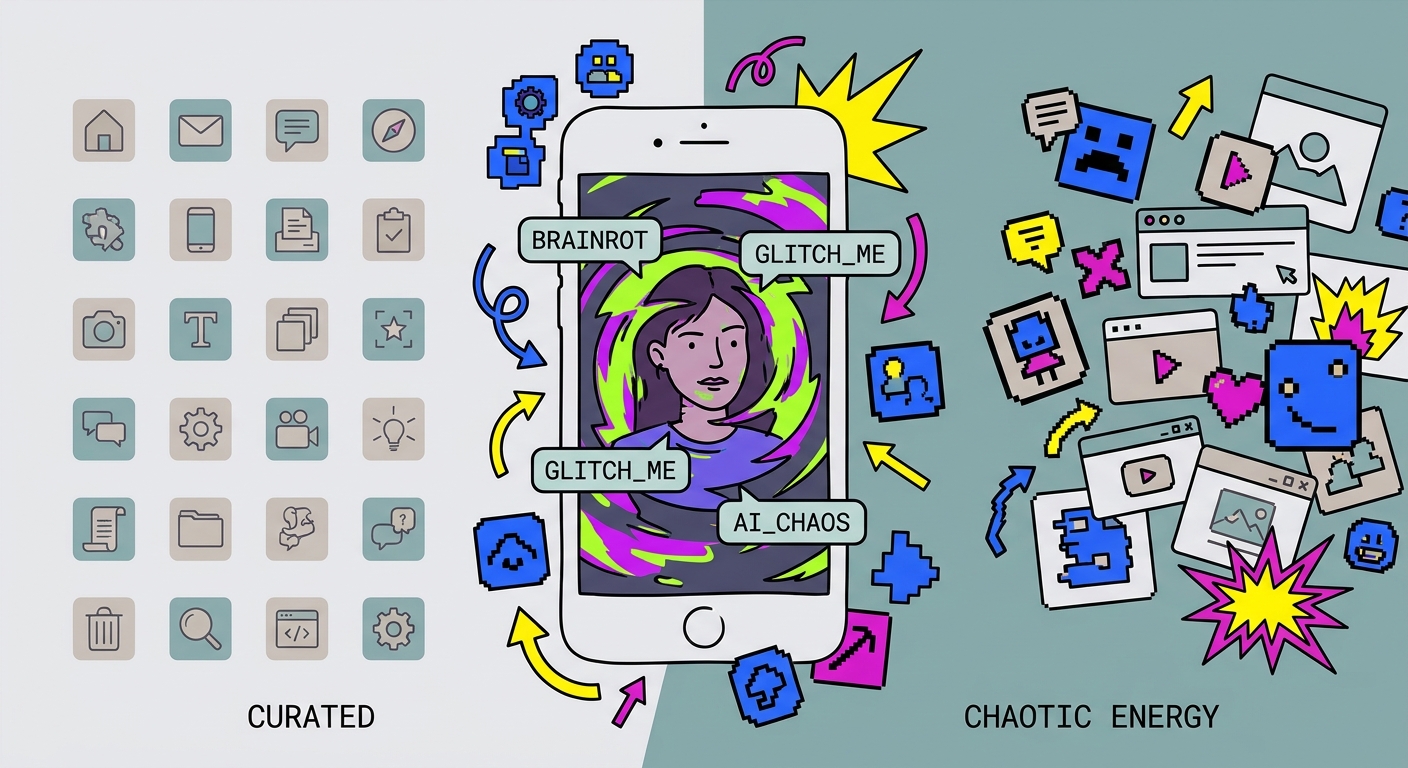

The era of the "perfect" Instagram feed is officially dead. You know the one: the perfectly curated, studio-lit, soul-crushingly polished aesthetic that defined the early 2020s. We’ve moved past that. In 2026, the digital landscape has shifted into something louder, weirder, and—dare I say—more human.

We aren’t hunting for corporate perfection anymore. We’re hunting for personality. We want kinetic energy. We want that specific brand of "brainrot" humor that makes you stop scrolling mid-sip, look at your phone, and wonder, "What did I just watch?"

AI filters have evolved. They aren't just for smoothing out your skin or changing your hair color anymore; they are the engines of modern storytelling. They turn static, boring snapshots into living, breathing, chaotic masterpieces. As noted in The State of AI in 2026, the game has changed. Success no longer goes to the person with the most expensive lighting kit. It goes to the person who uses these tools as a megaphone for their own unfiltered, unique voice.

The Rise of "Brainrot" and the Death of Airbrushing

Spend five minutes on TikTok or Reels, and you’ll see it: content that feels gloriously unhinged. This is the "brainrot" aesthetic. It’s high-intensity, meme-dense, and intentionally jagged. It’s a middle finger to the airbrushed, unattainable lifestyles that influencers sold five years ago.

Users are flocking toward creators who embrace the glitch. We’re seeing Chibi-style transformations, surreal human-pet hybrids, and edits that look like they were put through a blender.

Is it silly? Sure. But look at the psychology behind it. When a creator takes their own likeness and runs it through an AI filter that turns them into a glitch-art masterpiece, they’re inviting the audience into an inside joke. It’s vulnerable in a strange, digital way. According to the CyberLink AI Trend Guide, these visual shake-ups spike retention rates because they shatter the pattern of the feed. When every other post is a polished, boring ad, your AI-distorted, high-energy chaos is the only thing that makes a thumb stop scrolling.

Building a Viral Workflow: The Two-Tier System

The secret to staying relevant isn’t just picking a random filter and hoping for the best. It’s about building a workflow that keeps your output consistent. The heavy hitters operate on a simple two-tier system: they use Large Language Models (LLMs) to nail the creative direction, then feed those concepts into image-to-video engines.

Stop guessing. Use an LLM to "prompt-engineer" your vision. Describe the lighting, the mood, the vibe, and the specific aesthetic. Once you have that prompt dialed in, push it through an image-to-video engine. This synergy is the backbone of modern content creation workflows. It turns a static thought into a dynamic visual in minutes rather than hours. The goal? Make it feel native. Make it feel alive.
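The two-tier workflow above can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions: `CreativeBrief`, `refinement_request`, and `build_prompt` are hypothetical names, and the code calls no real LLM or video API. Tier one produces text you would paste into whatever LLM you use; tier two flattens the filled-in fields into a single prompt for your image-to-video tool of choice.

```python
from dataclasses import dataclass, fields


@dataclass
class CreativeBrief:
    # Hypothetical fields mirroring what the workflow says to describe.
    subject: str
    lighting: str = ""
    mood: str = ""
    vibe: str = ""
    aesthetic: str = ""
    motion: str = ""


def refinement_request(idea: str) -> str:
    """Tier 1: text to paste into an LLM chat, asking it to expand a rough idea."""
    wanted = ", ".join(f.name for f in fields(CreativeBrief))
    return (
        f"Turn this rough idea into a short video prompt. Idea: {idea}. "
        f"Describe each of: {wanted}. One sentence per item."
    )


def build_prompt(brief: CreativeBrief) -> str:
    """Tier 2: flatten a filled-in brief into one prompt for an image-to-video tool."""
    parts = []
    for f in fields(brief):
        # Trim whitespace and trailing periods so the joined prompt stays clean.
        value = getattr(brief, f.name).strip().rstrip(".")
        if value:  # skip any element left blank
            parts.append(f"{f.name.capitalize()}: {value}")
    return ". ".join(parts) + "."
```

For example, `build_prompt(CreativeBrief(subject="a cat in a business suit", lighting="cinematic", motion="typing on a laptop"))` returns `"Subject: a cat in a business suit. Lighting: cinematic. Motion: typing on a laptop."` Keeping the brief as named fields makes it easy to swap one element (say, the mood) and regenerate, which fits the "tweak and test" habit.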

Why "Motion-First" is the Only Way Forward

We are living in a "Motion-First" world. If your content is a static image, you’re basically posting a dead end. The 2026 algorithm doesn't care about your high-resolution photography if it doesn't move.

Platforms are aggressively prioritizing content that holds the eye. Whether it’s a subtle parallax shift, a full-blown AI animation, or a weird "squish" effect on a portrait, movement signals to the algorithm that you’re worth watching.

This has raised the bar. As discussed in AI Image Generation Best Practices, the question isn't "Can you make a cool image?" anymore. It’s "Can you tell a story that moves?" If your content stands still, you’re invisible. Period. The jump from static to motion is the difference between 500 pity views and hitting the Explore page.

Steal These Prompts

Want to start today? I’ve put together three templates. Take these, tweak them, and test them.

Category A: Human-to-Chibi (Brand Mascots)

- Prompt: "Convert the provided high-resolution portrait into a 3D-rendered Chibi-style mascot. Maintain the expression and hairstyle, but simplify features, add soft glow lighting, and place against a solid, vibrant pastel background. High contrast, clean lines, Pixar-inspired texture."

Category B: "Brainrot" Aesthetic (Short-Form Video)

- Prompt: "Apply a chaotic, high-energy filter to the video. Add erratic frame-skipping, vibrant color-cycling, and subtle 'squish' distortions during the punchline. Style: 2000s internet nostalgia mixed with modern vaporwave. Focus on exaggerated facial reactions."

Category C: Anthropomorphic Pet Transformations

- Prompt: "Transform the pet in the video into a humanoid version wearing a business suit. Keep the eyes and fur texture realistic but animate the body to perform a human gesture, like drinking a coffee or typing on a laptop. Cinematic lighting, photorealistic, 4k render."

Know Your Platform

Don’t dump the same content everywhere. You have to speak the language of the platform.

- TikTok/Reels: This is your playground for chaos. Go fast. Go hard. Use those "brainrot" filters. The audience wants a laugh, and they want it five seconds ago.

- LinkedIn: The rules change. Use "Hyper-Personalized" avatars here. You want a professional headshot that feels tech-forward and innovative, not a cartoon. It shows you’re ahead of the curve without sacrificing your authority.

| Platform | Recommended AI Style | Primary Goal |
|---|---|---|
| TikTok/Reels | High-Motion, Meme/Brainrot | Viral Reach & Humor |
| LinkedIn | Professional/Hyper-Personalized | Authority & Thought Leadership |
| | Aesthetic/Atmospheric | Brand Identity |

The Authenticity Paradox

Here’s the thing: people are scared that AI makes content "fake." But if you use it right, AI actually makes your content more human.

The most successful creators in 2026 are the ones practicing the "Authenticity Paradox." They use AI to amplify their personality, not to hide behind a mask of perfection. My advice? If you’re using heavy AI, be upfront about it. A small label or a quick mention in the caption goes a long way. Your audience doesn't care if you use tools; they care if you lie to them.

Finding the Next Big Wave

Want to be a trendsetter? Stop looking at the top of the feed. The top is where trends go to die. Start looking at the edges. Dig into niche Discord communities where the early adopters are playing with beta tools. Watch the "velocity" of a filter. How fast are people remixing it? The next big thing usually starts as a "meme-within-a-meme" deep in a subreddit. If you can translate that weird niche energy into something accessible, you win.
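One rough way to put a number on a filter's "velocity" is to log how many remixes it picks up each day and compare recent windows. The sketch below assumes you already have daily remix counts (gathered by hand or from whatever counts a platform displays); the function names and the three-day window are hypothetical choices, not an official platform metric.

```python
# Hypothetical helpers; the daily counts come from your own tracking.


def remix_velocity(daily_remixes: list[int], window: int = 3) -> float:
    """Average remixes per day over the most recent `window` days."""
    if window <= 0 or len(daily_remixes) < window:
        raise ValueError("need at least `window` days of counts")
    recent = daily_remixes[-window:]
    return sum(recent) / window


def is_accelerating(daily_remixes: list[int], window: int = 3) -> bool:
    """True if the latest window averages more remixes per day than the one before it."""
    if len(daily_remixes) < 2 * window:
        raise ValueError("need at least two windows of counts")
    previous = daily_remixes[-2 * window:-window]
    return remix_velocity(daily_remixes, window) > sum(previous) / window
```

Comparing two windows rather than reading a single day's count smooths out one-off spikes; a filter whose latest window beats the previous one is still climbing, which is the "edge" signal described above.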

The Future is Yours

The barrier to entry has officially collapsed. You don’t need to be a technical editor anymore. You need to be a director. The primary skill of 2026 is prompt engineering—the ability to articulate a vision and guide the machine to build it.

If you experiment with these workflows, you aren't just keeping up; you’re setting the pace. As we keep tracking how AI is changing social media marketing, remember: the tech will shift, but the need for a human story never will.

The feed is waiting. What are you going to show them?

Frequently Asked Questions

Do I need professional design skills to use these AI filters?

No. Modern AI tools use prompt-based generation: you describe what you want, and the AI builds it. You act as the director, not the digital artist.

Are these AI trends permanent, or do they fade quickly?

Most AI filters are viral "micro-trends" that last a few weeks. The key is to adopt them early, while the trend is still gaining velocity, to maximize your reach.

Can I use these AI-generated images for commercial projects?

It depends on the tool's license; always check if the platform allows commercial use of generated assets before publishing for a brand.

Why is "Image-to-Video" better than just static AI images?

In 2026, motion-driven content tends to earn higher engagement and better algorithmic placement than static imagery, because movement captures and holds user attention.