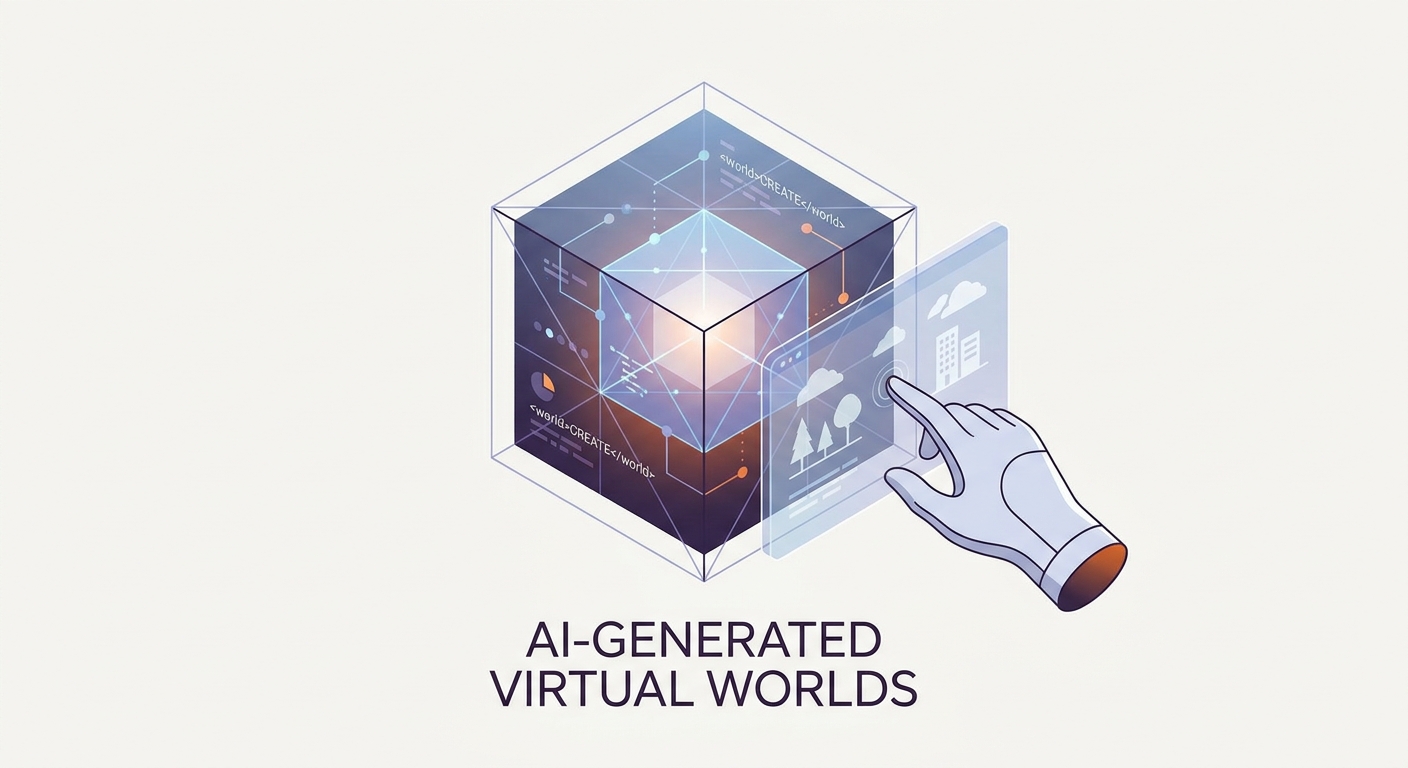

AI-Generated Virtual Worlds: A New Sandbox for Creativity

The barrier between a fleeting thought and a fully realized, interactive 3D environment? It’s gone. In 2026, we’ve moved past the era of flickering, glitchy AI video loops. We are now stepping into responsive, persistent, and physics-aware "World Models."

This is the biggest shift in digital creation since the 3D engine first hit the scene. We’re finally ditching the soul-crushing grind of manual vertex manipulation in favor of high-level creative direction. Whether you’re a solo dev or part of a mid-sized studio, mastering this new sandbox isn't just a "nice to have"—it’s the new baseline. If you’re still getting your bearings, our AI-Powered Content Creation Guide breaks down exactly how these generative workflows are tearing up the old rulebook.

The Dawn of the World Model: Why 2026 Changed Everything

For years, generative AI was stuck in the "text-to-video" bottleneck. Remember those days? A character would walk into a room, turn their head, and suddenly morph into a chair because the AI couldn't track object permanence to save its life.

That era is dead.

2026 is the year of the World Model. This architecture doesn't just "paint" pixels; it treats spatial logic and Newtonian physics as core, intrinsic properties. We’ve moved past the "Game Engine as a Fortress" mentality. Historically, building a virtual space meant suffering through C++, fighting with lighting pipelines, and enduring the endless gray-boxing slog.

Today, the engine is just a canvas. You don't "code" a world anymore. You describe the parameters of existence, and the model handles the heavy lifting of spatial synthesis in real time. The "blank page" problem? Solved. You aren't starting with a cube and a light source; you're starting with a vision.

How Do These Models Actually Build Reality?

The secret sauce is "Physics-by-Prompting."

Instead of manually defining collision boxes for every single asset, the model infers the physical properties of an object based on its identity. Tell the AI to build a "heavy stone bridge over a turbulent river," and it just knows. It understands mass. It understands resistance. It approximates the fluid dynamics of the water without you writing a single line of physics code.
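To make the idea concrete, here is a toy sketch of inferring physical properties from a description. It is not how any of the models named in this article work internally (they learn these associations from data rather than from a table). The `MATERIAL_TABLE` lookup, the `infer_properties` function, and the rough density values are all illustrative assumptions.

```python
from dataclasses import dataclass

# Hypothetical lookup: keyword -> (approximate density in kg/m^3, is it a fluid?)
MATERIAL_TABLE = {
    "stone": (2600.0, False),
    "wood": (700.0, False),
    "river": (1000.0, True),
}


@dataclass
class PhysicalProperties:
    keyword: str
    density: float  # kg/m^3, rough illustrative value
    is_fluid: bool


def infer_properties(description: str) -> list[PhysicalProperties]:
    """Return properties for every known keyword found in the description."""
    words = description.lower().replace(",", " ").split()
    found = []
    for keyword, (density, is_fluid) in MATERIAL_TABLE.items():
        if keyword in words:
            found.append(PhysicalProperties(keyword, density, is_fluid))
    return found


for props in infer_properties("heavy stone bridge over a turbulent river"):
    print(props)
```

The point of the sketch is the direction of the mapping: the creator names the object, and the physical parameters are derived from that name rather than entered by hand.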

The resulting pipeline transforms your intent into a navigable space. The latent world model acts like the "brain," predicting the next state of the environment, while a physics layer ensures that when you touch an object, it reacts with the weight and trajectory you’d expect.
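The loop described above can be pictured as "propose, then constrain." The following is a minimal sketch under that assumption, using a single falling ball as the whole world. Both functions are hypothetical stand-ins: `predict_next_state` is a plain extrapolation rather than a learned model, and `apply_physics` is a hand-written rule rather than a real physics engine.

```python
from dataclasses import dataclass

GRAVITY = -9.81  # m/s^2
DT = 0.1         # seconds per step


@dataclass
class BallState:
    height: float    # metres above the floor
    velocity: float  # m/s, positive is up


def predict_next_state(state: BallState, push: float) -> BallState:
    """Stand-in for the predictor: apply the user's push and extrapolate."""
    velocity = state.velocity + push
    return BallState(state.height + velocity * DT, velocity)


def apply_physics(state: BallState) -> BallState:
    """Constrain the proposal: add gravity and keep the ball above the floor."""
    velocity = state.velocity + GRAVITY * DT
    height = state.height
    if height <= 0.0:
        height = 0.0
        velocity = max(velocity, 0.0)  # no falling through the floor
    return BallState(height, velocity)


def step(state: BallState, push: float = 0.0) -> BallState:
    """One tick of the world: propose a next state, then constrain it."""
    return apply_physics(predict_next_state(state, push))


state = BallState(height=1.0, velocity=0.0)
for _ in range(10):
    state = step(state)
print(state)  # the ball has come to rest on the floor
```

Passing a positive `push` to `step` plays the role of user interaction: the proposal changes, and the physics rule still decides what is allowed.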

Which Tools Are Leading the Field in 2026?

The leaderboard right now is defined by three things: Latency, Fidelity, and Persistence.

Google DeepMind Genie 3 is the current technical heavyweight. By training on massive datasets of actual gameplay, this model has figured out how game mechanics function under the hood. It doesn't just make things look pretty; it makes them playable. You can discover the technical nuances of how they pulled off this leap in their latest research documentation.

Runway GWM-1 remains the industry gold standard for spatial consistency. While other models struggle to keep a room looking the same once you pivot the camera, GWM-1 hangs onto that fidelity. It’s the go-to for creators who need narrative-heavy, consistent environments. Take a look at their current model capabilities to see how they handle long-form spatial coherence.

Finally, Decart AI Oasis has completely dismantled the hardware barrier. They’ve made high-performance, real-time generation run straight in your browser. You no longer need a top-tier RTX 4090 rig to build. It’s immediate, it’s lightweight, and it’s accessible to anyone with a web connection. Their platform architecture proves that the future of interactive AI isn't locked behind expensive hardware.

Can AI Worlds Be Used for Professional Game Development?

The question isn't whether AI can replace professional game dev. It’s about how it accelerates the "Professional Pivot."

In the old world, the iteration cycle (concept art to gray-box to prototype) took weeks. With tools like World Labs (Marble), that cycle is compressed into hours. We’re seeing a massive shift toward "AI-Assisted Prototyping." Artists are using AI to generate high-fidelity Gaussian splats and meshes, exporting them straight into Unreal Engine or Unity.

This lets teams "feel" the gameplay loop in an interactive sandbox before a single production asset is finalized. It’s the ultimate speed-to-market advantage. You aren't building the game anymore; you’re curating the experience.

What Are the Limitations of Current Generative Sandboxes?

Let's be real: there’s a gap between a "dream-like" state and total persistence.

Current models are incredible at creating cohesive environments while you're in them, but they can get a bit forgetful. If you leave a room and come back, the AI might rearrange the furniture. It’s getting better, but long-term memory is still the final boss for these models.
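One way to picture the difference between a forgetful world and a persistent one is the sketch below. It assumes a hypothetical `generate_room` function standing in for the model and a plain dictionary standing in for long-term memory; real systems have to solve this inside the model, which is much harder.

```python
import random

FURNITURE = ["chair", "table", "lamp", "shelf", "rug"]


def generate_room(rng: random.Random) -> list[str]:
    """Stand-in for the generative model: pick three items in a random order."""
    return rng.sample(FURNITURE, k=3)


class ForgetfulWorld:
    """Regenerates a room on every visit, so the layout can change."""

    def __init__(self, seed: int) -> None:
        self.rng = random.Random(seed)

    def enter(self, room_id: str) -> list[str]:
        return generate_room(self.rng)


class PersistentWorld(ForgetfulWorld):
    """Stores each room the first time it is generated and replays it afterwards."""

    def __init__(self, seed: int) -> None:
        super().__init__(seed)
        self.memory: dict[str, list[str]] = {}

    def enter(self, room_id: str) -> list[str]:
        if room_id not in self.memory:
            self.memory[room_id] = generate_room(self.rng)
        return self.memory[room_id]


world = PersistentWorld(seed=7)
first_visit = world.enter("kitchen")
world.enter("hallway")
print(first_visit == world.enter("kitchen"))  # True: replayed from memory
```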

Then, there are the guardrails. As these sandboxes open up, platforms are leaning hard into "Human-in-the-loop" curation. The AI provides the chaos; the human provides the intent. You still need a pulse to ensure the space is safe, stable, and actually makes sense for your narrative.

How Can You Start Building Your First AI-Native World?

Forget syntax. Start focusing on "intent-based" communication.

- Define the Physics: Clearly state the "laws" of your world in your prompt (e.g., "low-gravity, underwater environment with bioluminescent lighting").

- Iterate on the Foundation: Use a tool like Decart or Runway to establish the base layout.

- Curate for Coherence: Once you have a base, manually tweak the key elements that define the space.

- Export and Refine: Use professional export pipelines to bring your best generated assets into a standard engine for the final polish.
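The first step above, stating the world's laws up front, can be kept consistent across iterations by building prompts from a small structured spec. This is a minimal sketch: `WorldSpec`, its fields, and the prompt layout are hypothetical conventions made up for illustration, not a format that any of the tools mentioned here require.

```python
from dataclasses import dataclass, field


@dataclass
class WorldSpec:
    setting: str
    gravity: str = "earth-like"
    lighting: str = "natural daylight"
    extra_rules: list[str] = field(default_factory=list)

    def to_prompt(self) -> str:
        """Render the spec as a single prompt, physics first."""
        parts = [
            f"Physics: {self.gravity} gravity.",
            f"Setting: {self.setting}.",
            f"Lighting: {self.lighting}.",
        ]
        parts += [f"Rule: {rule}." for rule in self.extra_rules]
        return " ".join(parts)


spec = WorldSpec(
    setting="underwater environment",
    gravity="low",
    lighting="bioluminescent",
    extra_rules=["objects drift slowly when released"],
)
print(spec.to_prompt())
```

Keeping the physics in named fields makes it easy to change one law at a time between iterations and compare the results.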

If you’re ready to dive in, explore our 2026 AI Tool Directory to find the right entry point for your skill level.

The Future of Creative Agency: Are We Still the Designers?

The role of the designer is undergoing a massive metamorphosis. We are moving from "Creator"—the person who lays every brick—to "Curator," the person who envisions the architecture and guides the machine.

Is this a loss of agency? Hardly. It’s an expansion of our reach. By offloading the technical grunt work to the model, we finally have the freedom to focus on what actually matters: the narrative, the pacing, and the emotional resonance of the space. The sandbox is open. The tools are ready. The only constraint left is the quality of your vision.

Frequently Asked Questions

Are these AI-generated worlds persistent, or do they change every time I enter?

Most current models offer "session-based" persistence. While they maintain consistency during your active session, they often regenerate slightly different details if you start a new one. True long-term persistence is the current "holy grail" of the industry, and newer latent-memory architectures are actively working toward it.

Do I need coding skills to build an AI-generated virtual world in 2026?

Not at all. The shift toward natural language prompting and browser-based interfaces means that creative intent is now more valuable than syntax. While understanding the basics of 3D space helps, the model handles the technical heavy lifting of mesh generation and physics.

How do I export my AI-generated environment into a game engine like Unity or Unreal?

Platforms like World Labs (Marble) allow you to export your generated environments as standard 3D file formats (such as .obj, .fbx, or Gaussian splat data). You can then import these into Unity or Unreal to serve as the foundation for your final, production-grade game builds.
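Before importing an exported mesh into an engine, it can be worth a quick sanity check that the file actually contains geometry. The sketch below counts vertices and faces in Wavefront OBJ text using only the Python standard library. The `summarize_obj` helper and the sample triangle are illustrative assumptions, and the check covers .obj only, not .fbx or splat data.

```python
import io
from typing import TextIO


def summarize_obj(stream: TextIO) -> dict[str, int]:
    """Count vertex ('v') and face ('f') records in Wavefront OBJ text."""
    counts = {"vertices": 0, "faces": 0}
    for line in stream:
        # The first whitespace-separated token identifies the record type.
        tag = line.split(maxsplit=1)[0] if line.strip() else ""
        if tag == "v":
            counts["vertices"] += 1
        elif tag == "f":
            counts["faces"] += 1
    return counts


SAMPLE_OBJ = """\
# a single triangle
v 0.0 0.0 0.0
v 1.0 0.0 0.0
v 0.0 1.0 0.0
f 1 2 3
"""

summary = summarize_obj(io.StringIO(SAMPLE_OBJ))
print(summary)  # {'vertices': 3, 'faces': 1}
```

For a real export, pass an open file handle instead of the in-memory sample, for example `summarize_obj(open(path, encoding="utf-8"))`, where `path` points at your exported file.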

What is the fundamental difference between a "World Model" and standard "Text-to-Video" AI?

Text-to-video AI is passive; it generates a fixed sequence of images that looks like a movie. A World Model is interactive and reactive; it understands the underlying physical rules of the environment, allowing you to move through it, interact with objects, and change the state of the world in real time.